By Raj Krishnamurthy, CEO, Freespace

For some, the return to the office cannot come soon enough. Homeworking has been a reasonable compromise, but a lack of social interaction and inadequate office space at home have made working life difficult. The return to work will not be simple, though. Covid-19 case numbers are rising again, so strong precautionary measures need to be in place. The government has issued guidelines on which to build a strong safety strategy. They include staggering employees’ arrival and departure times, reducing workplace congestion, providing more storage, using one-way systems, and providing sanitation facilities. The challenge for building managers will be planning and implementing these measures effectively. Technology can streamline this process.

Ensuring hygiene

Many workplaces have been moving towards becoming smart buildings for some time. Sensors and data-driven insights make the office more efficient. Now, these same technologies will be used to manage the office in the post-Covid era. Areas used by multiple people pose a high risk of transmitting the virus. By tagging individual spaces and desks, the use of these areas can be tracked. This may be a matter of using occupancy data or a ‘check-in’ system.

A ‘check-in’ system can be implemented using micro-location technology. Each desk or workspace is tagged with a QR code that users scan with their phone, so when an employee uses the desk, it is listed as occupied. When the employee has finished, they check out of the desk in the same way. Cleaning staff then know to sanitise the area. Once they have done so, the cleaner scans the QR code, letting the system know that the desk is clean and available for use. As well as keeping staff safe, this system saves time by showing desk availability as employees arrive at the office, so nobody wastes time searching for a sanitised and socially distanced space. In addition, a track-and-trace system can be built in, because every check-in and check-out is recorded.
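The check-in cycle described above can be sketched as a small state machine. This is only an illustrative sketch, not a description of any particular product: the `Desk` class, the state names, and the method names are hypothetical, and a QR scan is assumed to arrive as a simple method call carrying the name of the person who scanned.

```python
from dataclasses import dataclass, field
from datetime import datetime
from enum import Enum
from typing import List, Optional, Tuple


class DeskState(Enum):
    AVAILABLE = "available"            # clean and free to use
    OCCUPIED = "occupied"              # an employee has checked in
    NEEDS_CLEANING = "needs_cleaning"  # checked out, not yet sanitised


@dataclass
class Desk:
    desk_id: str
    state: DeskState = DeskState.AVAILABLE
    occupant: Optional[str] = None
    # (person, action, timestamp) records; a basis for track and trace
    history: List[Tuple[str, str, datetime]] = field(default_factory=list)

    def _log(self, person: str, action: str) -> None:
        self.history.append((person, action, datetime.now()))

    def check_in(self, employee: str) -> None:
        # Only a clean, free desk can be taken.
        if self.state is not DeskState.AVAILABLE:
            raise ValueError(f"{self.desk_id} is {self.state.value}")
        self.state = DeskState.OCCUPIED
        self.occupant = employee
        self._log(employee, "check_in")

    def check_out(self, employee: str) -> None:
        if self.state is not DeskState.OCCUPIED or self.occupant != employee:
            raise ValueError(f"{employee} is not checked in at {self.desk_id}")
        self.state = DeskState.NEEDS_CLEANING
        self.occupant = None
        self._log(employee, "check_out")

    def mark_cleaned(self, cleaner: str) -> None:
        # The cleaner's scan returns the desk to the available pool.
        if self.state is not DeskState.NEEDS_CLEANING:
            raise ValueError(f"{self.desk_id} is {self.state.value}")
        self.state = DeskState.AVAILABLE
        self._log(cleaner, "cleaned")
```

Because a desk cannot move from "needs cleaning" back to "occupied" without a cleaner's scan, the availability shown to arriving employees only ever lists sanitised desks, and the history list doubles as the record of who used which desk and when.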

As the workplace continues to adapt, the precautions taken may also need to change. Many buildings are unlikely to be reoccupied fully this year. Employees may be working in split shifts and certain areas of the building may be closed for months to come. Protocols may change over the months according to risk. To ensure the necessary measures are easily understood and followed, up-to-date signage will be necessary. Technology is useful here, too. Digital platforms make up-to-date information available instantly to all staff. This includes current precautions, information on which areas of a building are accessible, and updates that reassure staff that their managers are well informed and taking all necessary precautions. These signs can also play a role in the desk ‘check-in’ system: a digital sign can show, in real time, which desks are available and how to get to them if a one-way system is in place.

Changing signs regularly is also an effective way to ensure they do not go unnoticed. Small, habitual actions such as washing one’s hands regularly and refraining from touching one’s face are easily forgotten, so regular reminders are especially important. Research by Intel has also found that digital signage captures 400% more views than static signage, so it can make a significant difference to workplace safety.

Using occupancy data

The most obvious use for occupancy sensors is to determine how many people are in the building. Safe occupancy levels for buildings will be far lower in the coming months because of social distancing. Occupancy data can be used to ensure the safe level is not exceeded.
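As a rough illustration, the check against a reduced safe level might look like the sketch below. The function names and the idea of expressing the safe level as a percentage of normal capacity are assumptions made for the example; the actual safe level for a building depends on its layout and the guidance in force.

```python
def safe_capacity(normal_capacity: int, allowed_percent: int) -> int:
    """Return the reduced headcount limit, rounded down.

    allowed_percent is a hypothetical policy setting (0 to 100).
    """
    if not 0 <= allowed_percent <= 100:
        raise ValueError("allowed_percent must be between 0 and 100")
    return normal_capacity * allowed_percent // 100


def can_admit(current_occupancy: int, normal_capacity: int,
              allowed_percent: int) -> bool:
    """True if one more person can enter without exceeding the safe level."""
    return current_occupancy < safe_capacity(normal_capacity, allowed_percent)
```

For a building that normally holds 200 people and is limited to 25% of that, `safe_capacity(200, 25)` gives a limit of 50, and `can_admit` returns `False` once the sensors report 50 people inside.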

Occupancy sensors have been growing in popularity over the past few years. With a single desk in London coming in at over £15,000 a year, failing to properly optimise space can be costly. While this is still true in the post-Covid era, how we optimise space looks very different. Social distancing requires space to be used at a far lower density. For some offices, this means being open for longer hours or over the weekend to allow for split-shift and staggered working. Occupancy sensors can be used to help determine which additional hours are most valuable and how much office space needs to be open during extended hours.

Under-desk occupancy sensors can be combined with the check-in system. This ensures that desk use is recorded even if staff forget to scan the QR code at their desk. The data collected can show cleaning staff how quickly desks turn over, so that sanitisation can be prioritised accordingly. Sanitisation can be maintained at a high level, while the QR code becomes a means of assurance for higher-risk employees, who can scan it to check cleanliness before use. Sensors in shared areas of the office will also record use. The areas used the most are high-risk touchpoints that require regular deep cleans. Far more frequent and intensive cleaning is needed in the current circumstances. Without prioritisation, cleaning staff and resources will quickly become overstretched. Using data insights to direct the cleaning regime is a means of preventing this.
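The two ideas in this section, backing up QR scans with sensor readings and ranking areas by use, can be sketched in a few lines. The function names and the shape of the input data (sets of desk IDs, a list of area names with one entry per recorded use) are hypothetical and chosen only to keep the example short.

```python
from collections import Counter
from typing import Iterable, List, Set


def desks_used(scanned: Set[str], sensed: Set[str]) -> Set[str]:
    """A desk counts as used if it was scanned OR its sensor fired,
    so a forgotten QR scan does not hide the use."""
    return scanned | sensed


def cleaning_priority(usage_events: Iterable[str]) -> List[str]:
    """Rank areas from most to least used.

    usage_events holds one area name per recorded use.
    """
    counts = Counter(usage_events)
    return [area for area, _ in counts.most_common()]
```

Given the events `["kitchen", "lift", "kitchen", "printer", "kitchen", "lift"]`, `cleaning_priority` puts the kitchen first, then the lift, then the printer, which is the order in which a stretched cleaning team would visit them.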

Allaying fears

It is also important to reassure employees on their return to the office. The past six months have been stressful for everyone and the return to the office may cause further anxiety. By demonstrating that it is well informed and taking all necessary precautions, facilities management can allay the fears of those returning. Employees will be more comfortable in an environment that is visibly and regularly sanitised, and ‘hygiene anxiety’ need not become a distraction from work.

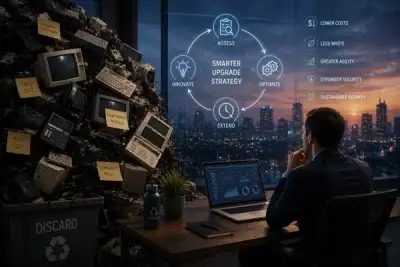

Workplace technology has long been evolving to create more efficient and user-friendly workplaces. These features are still very much in demand, but the way we approach them has changed. Existing technologies are demonstrating their flexibility as they are turned to the challenges of Covid-19. Whether the virus is managed with a vaccine or remains within our population for years to come, these technologies will continue to prove their worth in the post-Covid era.