digna has released version 2026.04 of its data quality and observability platform. According to the platform update, the release adds time-series analytics and validation capabilities intended to help financial institutions monitor and understand complex data environments.

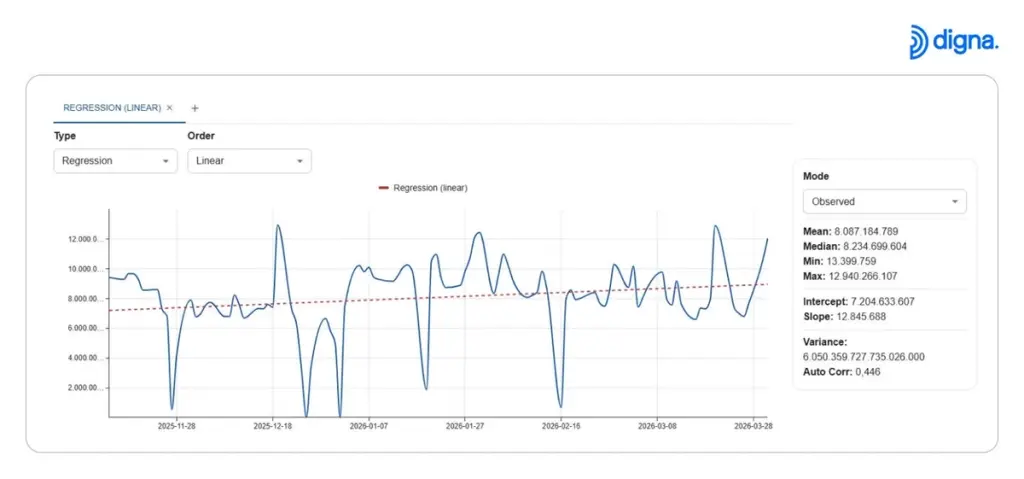

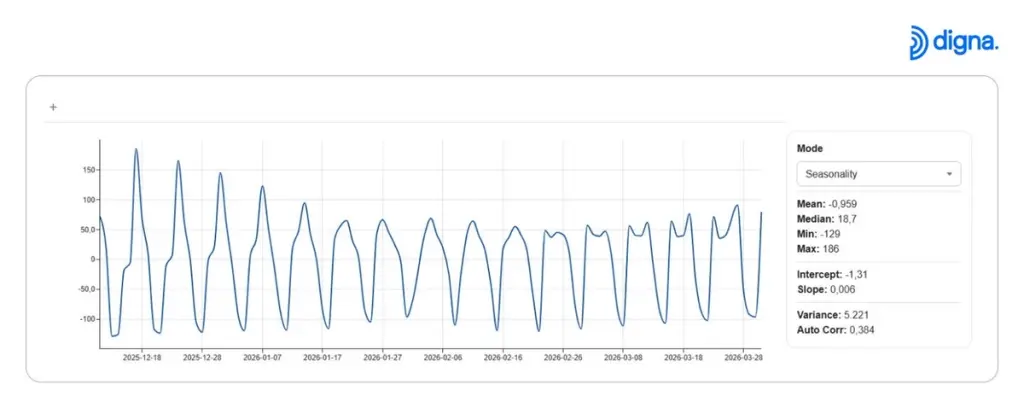

The update introduces a new analytics chart that enables users to analyze time-series data directly within the platform, without requiring dedicated data science resources or external tools. Built-in methods include regression models, smoothing techniques, quantile analysis, and automated identification of trends, seasonality, and pattern changes.

In financial environments where data volumes are high and reporting cycles are continuous, understanding how data behaves over time is critical. Across the financial services industry, institutions are increasingly investing in data observability and advanced analytics tools to manage growing data complexity and ensure operational accuracy.

According to McKinsey, organizations that effectively leverage data and analytics are significantly more likely to improve operational efficiency and performance (https://www.mckinsey.com/capabilities/mckinsey-analytics/our-insights).

However, advanced analysis often depends on specialist teams or custom tooling. According to digna, the new functionality allows business and data teams to explore patterns, identify anomalies, and investigate deviations without relying on Python-based workflows or separate analytical systems.

Example of time-series analysis highlighting transaction trends and deviations using regression models.

The platform automatically generates time-series views for datasets, allowing teams to observe changes in transaction data, reporting metrics, or operational indicators as they evolve. This capability is particularly relevant for financial institutions managing regulatory reporting, fraud detection signals, or performance metrics that require consistent monitoring.

Detection of recurring seasonal patterns in financial data, supporting better interpretation of cyclical behavior.

Beyond analytics, the release standardizes how data validation is defined. Organizations can now define centralized enumerations, such as country codes, transaction types, or status values, and apply them consistently across datasets and systems. This keeps data values aligned across reporting layers and reduces discrepancies in downstream analysis.
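
As an illustration of the concept, a centralized enumeration can be thought of as a single definition of allowed values that every dataset is checked against. The sketch below assumes a hypothetical `TransactionType` enumeration and an `invalid_values` helper; neither reflects digna's actual configuration format.

```python
from enum import Enum


class TransactionType(str, Enum):
    """Hypothetical shared enumeration of allowed transaction types."""
    PAYMENT = "PAYMENT"
    REFUND = "REFUND"
    TRANSFER = "TRANSFER"


def invalid_values(rows, column, enum_cls):
    """Return (row_index, value) pairs whose value is not in the enumeration."""
    allowed = {member.value for member in enum_cls}
    return [
        (i, row.get(column))
        for i, row in enumerate(rows)
        if row.get(column) not in allowed
    ]


# The same enumeration can be applied to any dataset that carries the column.
ledger = [{"type": "PAYMENT"}, {"type": "refund"}, {"type": "TRANSFER"}]
problems = invalid_values(ledger, "type", TransactionType)
```

Here the lowercase `"refund"` is reported because the comparison is exact. Whether matching should be case-sensitive is a design choice that the shared definition makes once for all datasets.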

The platform also introduces reusable validation rule templates, allowing financial institutions to standardize common validation logic across multiple datasets. These rules are executed directly within the source database, eliminating the need to move sensitive financial data for validation purposes.
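
The general pattern of a reusable rule that runs inside the database might look like the sketch below. It uses SQLite from the Python standard library purely for illustration; the template text, the `run_range_rule` helper, and the table and column names are assumptions, not digna's rule syntax.

```python
import sqlite3

# Hypothetical template: count rows where a column is NULL or outside a range.
RANGE_RULE = (
    'SELECT COUNT(*) FROM "{table}" '
    'WHERE "{column}" IS NULL OR "{column}" NOT BETWEEN ? AND ?'
)


def run_range_rule(conn, table, column, low, high):
    """Apply the range template to one table and column, returning the violation count."""
    # Identifiers cannot be bound as query parameters, so restrict them to safe names.
    for name in (table, column):
        if not name.isidentifier():
            raise ValueError(f"unsafe identifier: {name!r}")
    sql = RANGE_RULE.format(table=table, column=column)
    (violations,) = conn.execute(sql, (low, high)).fetchone()
    return violations


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE payments (amount REAL)")
conn.executemany(
    "INSERT INTO payments VALUES (?)", [(10.0,), (250.0,), (-5.0,), (None,)]
)
violations = run_range_rule(conn, "payments", "amount", 0, 1000)
```

Only the aggregate count leaves the database; the underlying rows stay where they are, which is the property the in-database approach is meant to provide.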

The release also adds statistic-level relevance conditions. These let teams control when a specific metric should be considered relevant, which reduces noise in monitoring and focuses attention on meaningful deviations.
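
One way to picture a relevance condition is as a predicate that is checked before a statistic is evaluated at all. The sketch below is a hypothetical model, with `StatisticCheck`, its fields, and the row-count condition all chosen for illustration rather than taken from the platform.

```python
from dataclasses import dataclass
from typing import Callable, Mapping


@dataclass
class StatisticCheck:
    """Hypothetical check on one statistic, gated by a relevance condition."""
    name: str
    threshold: float
    is_relevant: Callable[[Mapping[str, float]], bool]

    def evaluate(self, stats: Mapping[str, float]) -> str:
        # Skip the statistic entirely when the relevance condition is not met.
        if not self.is_relevant(stats):
            return "skipped"
        return "alert" if stats[self.name] > self.threshold else "ok"


# Assumed condition: the null ratio only matters once a load has enough rows.
null_ratio_check = StatisticCheck(
    name="null_ratio",
    threshold=0.05,
    is_relevant=lambda s: s["row_count"] >= 1000,
)
small_load = null_ratio_check.evaluate({"null_ratio": 0.2, "row_count": 40})
full_load = null_ratio_check.evaluate({"null_ratio": 0.2, "row_count": 5000})
```

In this sketch the small load is skipped rather than raising an alert, while the same null ratio on the full load is reported.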

The combined capabilities are intended to support financial organizations in maintaining consistent, reliable, and interpretable data environments, particularly as data architectures grow more complex and distributed.

Financial institutions increasingly face challenges in balancing data governance, operational efficiency, and scalability. According to Deloitte, data complexity and governance challenges continue to increase as organizations scale digital operations and adopt advanced analytics systems (https://www2.deloitte.com/global/en/pages/technology/articles/data-modernization.html). The World Economic Forum also highlights that improved data transparency and analytics capabilities are becoming critical for organizations operating in increasingly complex digital environments (https://www.weforum.org/reports).

The release reflects a broader shift toward enabling teams not only to detect issues in data but also to understand how and why data behavior changes over time.