Financial analytics and the use of big data

Published by Gbaf News

Posted on July 18, 2013

Last updated: February 27, 2019

Related Articles

The Hidden Mechanics of Trading: What Really Drives Market Decisions

The Invisible Force Behind Every Great Trader: Why Curiosity May Matter More Than Strategy

The Invisible Edge: Why Trading Outcomes Are Shaped Beyond the Chart

The Invisible Current: What Really Moves Trades Before They Happen

The Quiet Forces of Trading: What Moves Markets When No One Is Looking

More from Trading

Explore more articles in the Trading category

The Strange Truth About Trading That Most People Discover Too Late

The Unseen Flow: Why Trading Is Driven by Forces You Don’t Track

Why Do Traders Keep Watching the Market Even When They’re Not Trading?

Why Do Some Traders Stay Calm While Others Panic? The Hidden Psychology Behind Market Decisions

Trading Sphere: Structuring a Modern Approach to Online Trading

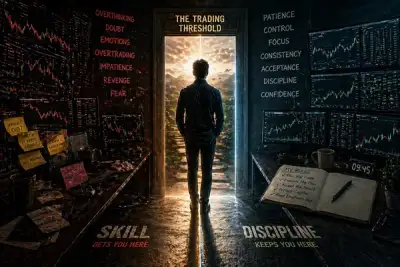

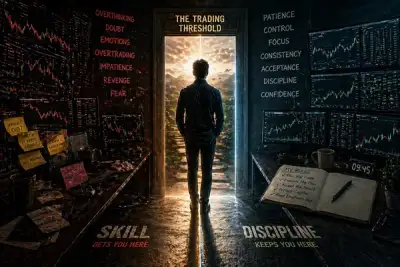

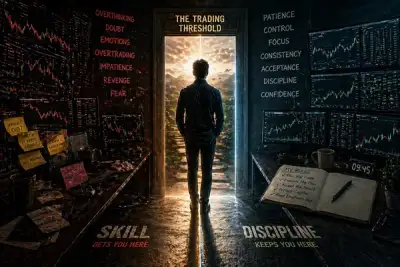

The Trading Threshold: The Point Where Skill Stops Helping and Discipline Takes Over

Why Traders Often Miss the Obvious—Until It’s Too Late

The Trading Threshold: The Point Where Skill Stops Helping and Discipline Takes Over

The Trading Paradox: Why Doing Less Often Leads to Better Results

The Trading Blind Spot: Why What You Don’t Notice Often Matters Most

The Risk That Doesn’t Look Like Risk

The Market Mirror: What Trading Reveals About Decision-Making Under Pressure